With Franziska Sofia Hafner

Schwartzman Centre

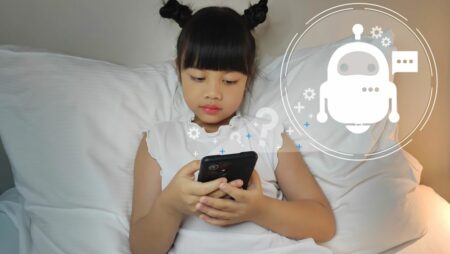

A new study from Oxford Internet Institute researchers finds that training chatbots to sound warmer makes them up to 30% less accurate, and 40% more likely to validate users' false beliefs.

Sofia is a DPhil student in Social Data Science at the OII. Her research focuses on algorithmic fairness, machine learning, and interactive data visualisation. Sofia’s research on algorithmic fairness has been published in academic journals and conference proceedings, including AI & Society and ACM FAccT.

Sofia holds an undergraduate degree in Computer Science and Public Policy from the University of Glasgow and a master’s in Social Data Science from the OII. She has held multiple research positions, including as a visiting scholar in Computer Science at the American University, as an intern at the Urban Big Data Centre, and as a research assistant for the Synthetic Society Lab.

Research areas: Algorithmic Fairness, Machine Learning, Interactive Data Visualisation, Recommendation Systems

29 April 2026

New Oxford research shows that training chatbots to sound warmer makes them up to 30% less accurate, and 40% more likely to validate users' false beliefs.

25 November 2025

Researchers from the Oxford Internet Institute at the University of Oxford will be at NeurIPS 2025 in San Diego from 1 to 7 December 2025, contributing to one of the world’s leading AI conferences.

20 June 2025

OII researchers are set to attend the Association for Computing Machinery (ACM) Conference on Fairness, Accountability and Transparency (FAccT) 2025.

9 June 2025

The Oxford Internet Institute’s Franziska Sofia Hafner explores whether language models are perpetuating gender stereotypes.

The Verge, 29 April 2026

The researchers found AI chatbots trained to be warmer were significantly more likely to make factual errors and agree with false beliefs than the originals.

The Guardian, 29 April 2026

Chatbots programmed to respond warmly even cast doubts on Apollo moon landings and fate of Hitler, researchers say

Telegraph Online, 29 April 2026

Systems trained to sound friendlier are up to 30 per cent less accurate, study finds