Manuel Schaeffer

“I wish I had,” said Steve Jobs when asked whether he had designed the Apple logo as a tribute to the computer pioneer Alan Turing, who is believed to have committed suicide with a poisoned apple. Nevertheless, we can draw a line between the mathematician and contemporary technology companies. Without Turing, the development of computer science, the end of World War II and the advances in artificial intelligence would all have taken much longer.

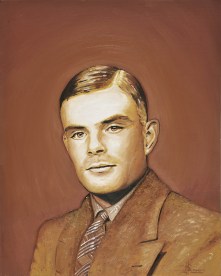

We are celebrating Turing’s centenary, and although his name is largely unknown to the wider public, he will enter the history books and the pantheon of science right next to Newton and Darwin. The celebration might accelerate that process and raise awareness of the sleeping giant. But we need to be careful not to create another simplified genius narrative.

In celebration, the Internet is full of dedicated, commemorative homepages, blogs, articles and other digital votive offerings. A quick Google search for “Turing” yields 16 million results. The online funeral procession is led by Google’s doodle, which rebuilds a version of the so-called Turing machine. This abstract machine consists of a tape divided into cells, each holding a symbol, and a head that reads and rewrites those symbols one at a time according to a fixed table of rules. It allows computer scientists to simulate algorithms and understand the limits of computation.
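The idea of a tape and a table of rules can be made concrete in a few lines of Python. What follows is only a minimal sketch, not a reconstruction of Google’s doodle or of Turing’s original notation: the function name `run_turing_machine`, the rule table `INCREMENT_RULES` and the state names are all invented here for illustration. The example machine adds one to a binary number written on the tape.

```python
from collections import defaultdict

BLANK = "_"  # symbol assumed for an empty tape cell (illustrative choice)


def run_turing_machine(rules, tape, state="start", head=0, max_steps=1000):
    """Run a one-tape machine until it enters the 'halt' state.

    rules maps (state, symbol) -> (symbol_to_write, move, next_state),
    where move is "L" or "R". All names here are hypothetical.
    """
    # The tape is unbounded in both directions; unseen cells read as blank.
    cells = defaultdict(lambda: BLANK, enumerate(tape))
    steps = 0
    while state != "halt":
        if steps >= max_steps:
            raise RuntimeError("step limit reached without halting")
        write, move, state = rules[(state, cells[head])]
        cells[head] = write
        head += 1 if move == "R" else -1
        steps += 1
    if not cells:
        return ""
    lo, hi = min(cells), max(cells)
    return "".join(cells[i] for i in range(lo, hi + 1)).strip(BLANK)


# Rule table for binary increment: walk right to the end of the number,
# then move left, turning 1s into 0s until a 0 (or a blank) absorbs the carry.
INCREMENT_RULES = {
    ("start", "0"): ("0", "R", "start"),
    ("start", "1"): ("1", "R", "start"),
    ("start", BLANK): (BLANK, "L", "carry"),
    ("carry", "1"): ("0", "L", "carry"),
    ("carry", "0"): ("1", "L", "halt"),
    ("carry", BLANK): ("1", "L", "halt"),
}

print(run_turing_machine(INCREMENT_RULES, "1011"))  # prints 1100
print(run_turing_machine(INCREMENT_RULES, "111"))   # prints 1000
```

The machine itself knows nothing about arithmetic. It only looks up the current state and symbol, writes, and moves one cell, which is precisely why such a simple device is useful for reasoning about what can and cannot be computed.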

Instead of using a humorous or art-like doodle, as Google usually does for anniversaries of scientists, the Turing doodle was a practical riddle that needed to be solved. In six steps the user could interact with the doodle and eventually spell “Google” in binary code (0s and 1s): a great way to simultaneously scare and excite people.

Why does he deserve to be in the scientific pantheon? Turing was born in 1912 in Britain, finished his studies at Cambridge with First-Class Honours in Mathematics and became a fellow there at the age of 22. During the war, he ran the unit at Bletchley Park that was responsible for cracking the codes of the German Navy. He developed machinery to decrypt the Enigma codes and, by providing valuable information about German strategies, shortened the war by at least several months. A former colleague described him in an interview: “The extraordinary thing is that this quiet man was probably the most important man of his time, except possibly Churchill. Turing did not look like a superstar, he was a very modest man, but Turing was the genius who broke Naval Enigma.”

Before and after the war he worked on several scientific papers about computation and laid the foundation for modern computer science. For him, there was always the possibility that “machines can think”, and he concluded one of his papers with the bold prediction: “We may hope that machines will eventually compete with men in all purely intellectual fields.” Algorithms, AI, software – these are words that have become meaningful due to the quiet work of Alan Turing.

After the war, Turing was convicted for homosexuality, which was a crime under British law until the 1960s. Instead of going to prison, Turing chose chemical castration and was treated with female hormones. Two years after the conviction, at the age of 41, he apparently committed suicide. His housekeeper found him in his bed with a half-eaten, presumably poisoned, apple beside him. This act is believed to be a symbolic reference to Snow White, a story that was said to have haunted Turing. Although possibly apocryphal, this narrative offers a clever and imaginative end, fitting a clever and imaginative man. As the Italians say, if it is not true then it is at least well told.

Since the chemical castration and his personal tragedy are probably connected, Prime Minister Gordon Brown issued a public apology in 2009, following an online petition:

“While Mr. Turing was dealt with under the law of the time and we can’t put the clock back, his treatment was of course utterly unfair and I am pleased to have the chance to say how deeply sorry I and we all are for what happened to him.”

However, the apology was not accompanied by an official overturning of the conviction, although several people fought for a symbolic clearing of the charges. Such a gesture would have gone some way towards rectifying the entanglement of British law with the tragedy of one of the country’s most important scientists, an entanglement that remains problematic even if it was legal in the positivist understanding of jurisprudence.

But history is never certain, and we should be careful with the simple narrative of a haunted genius who committed suicide, as such constructions can be misleading. Professor Copeland, a Turing expert who recently spoke at the University of Oxford, argues that it was not necessarily suicide; murder or an accident are equally possible. For instance, the apple was never tested for traces of cyanide, Turing was said to be quite cheerful in the weeks and months preceding his death, and his mother remembered a cyanide experiment he was conducting.

Copeland summarises: “In a way we have in modern times been recreating the narrative of Turing’s life, and we have recreated him as an unhappy young man who committed suicide. But the evidence is not there.”

Without any doubt, Turing was brilliant. He contributed immensely to the developments that are now so familiar when we hold an iPhone, use a notebook or surf the Internet. Regardless of the circumstances of his death, there is still a bitter irony in the fact that the scientist who contributed most during the war was criminalised by the same nation he tried to preserve with his service during World War II. However, we should also be cautious. Whenever there is a genius to be made, we are inclined to reach for the categories of the tragic hero (Turing) or the eccentric genius (as in the case of Einstein), mainly to render the incomprehensible complexity of their work tangible. In Turing’s case, his personal life and work become more familiar through the tragedy.

The familiarity of the tragic hero narrative may well help cement his story and achievements in the wider public memory. This is not, in itself, problematic. Yet it also seems to mark the beginning of a genius narrative of the kind that inevitably leads to misunderstandings and simplifications. At the same time, it acknowledges that the forgotten genius has materialised. His legacy will have to be continuously analysed, and once the personal tragedy is well known, the actual focus on his work and achievements can begin.

The last sentence of his seminal paper on intelligent machines still holds: “We can only see a short distance ahead, but we can see plenty there that needs to be done.”